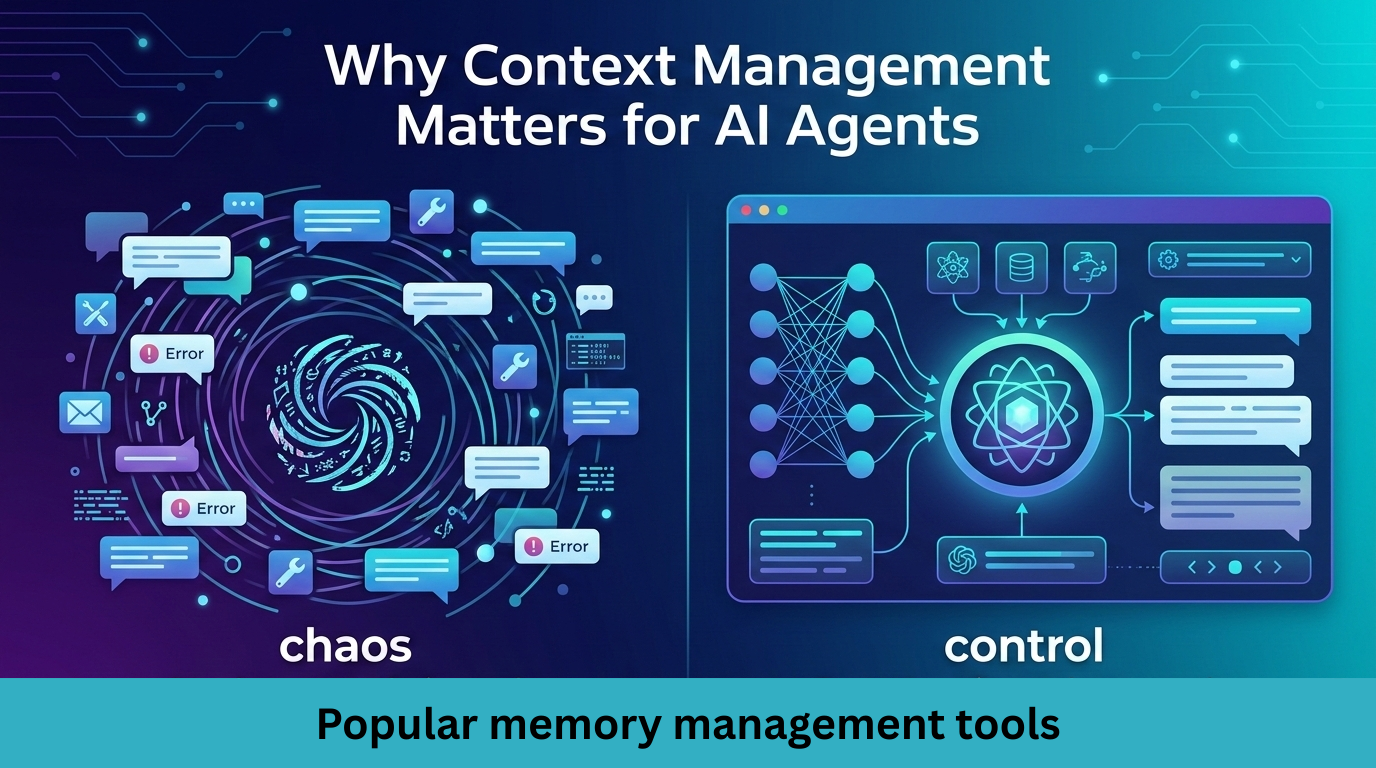

Most “AI agents” behave like stateless chatbots: they read a giant transcript, guess, and forget. This breaks down fast with tools, multi-step tasks, and long conversations.

Context engineering structures what goes into each LLM call: relevant memories, goals, constraints, and tool schemas. Without it, agents repeat work, hallucinate forgotten facts, and burn through context windows.
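To make that concrete, here is a minimal sketch of assembling one LLM call under a fixed budget. Everything in it is an assumption for illustration: `build_context` is a hypothetical helper, word counts stand in for real token counts, and memories are assumed to arrive sorted most relevant first.

```python
def build_context(goal, constraints, memories, budget_words=50):
    """Add memories in relevance order until the word budget is spent.

    Hypothetical helper: word counts are a crude proxy for tokens.
    """
    # Goal and constraints always go in; they are not optional context.
    parts = [f"Goal: {goal}"] + [f"Constraint: {c}" for c in constraints]
    used = sum(len(p.split()) for p in parts)

    for memory in memories:  # assumed sorted most relevant first
        line = f"Memory: {memory}"
        cost = len(line.split())
        if used + cost > budget_words:
            break  # stop at the first memory that does not fit
        parts.append(line)
        used += cost

    return "\n".join(parts)


prompt = build_context(
    goal="book a flight",
    constraints=["budget under 500 USD"],
    memories=["prefers aisle seats", "flew to Paris in May"],
    budget_words=14,
)
print(prompt)
```

With a budget this tight, only the first memory makes the cut. Deciding which memories deserve those slots is the hard part.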

Memory layers solve this by:

1. Extracting durable facts from raw interactions

2. Storing them efficiently (vectors, graphs, events)

3. Recalling only relevant context per step

4. Optionally reflecting to learn higher-level patterns
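The first three steps can be sketched in a few lines. This is a toy under loud assumptions, not any product's API: `MemoryLayer` is a hypothetical class, a keyword rule stands in for LLM extraction, and word overlap stands in for vector search.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryLayer:
    """Toy extract -> store -> recall loop (hypothetical, for illustration)."""

    facts: list = field(default_factory=list)

    def extract(self, message):
        # Stand-in for an LLM extraction call: keep sentences that look
        # like durable statements about the user.
        markers = ("my ", "i prefer", "i am", "i use")
        sentences = [s.strip() for s in message.split(".") if s.strip()]
        return [s for s in sentences if any(m in s.lower() for m in markers)]

    def store(self, message):
        for fact in self.extract(message):
            if fact not in self.facts:  # skip exact duplicates
                self.facts.append(fact)

    def recall(self, query, k=2):
        # Stand-in for vector search: rank facts by word overlap with the query.
        q = set(query.lower().split())
        scored = [(len(q & set(f.lower().split())), f) for f in self.facts]
        scored = [(score, f) for score, f in scored if score > 0]
        scored.sort(key=lambda pair: -pair[0])  # stable: ties keep insertion order
        return [f for _, f in scored[:k]]


layer = MemoryLayer()
layer.store("Hi there. I prefer Python for scripting. The weather is nice. My deploy target is AWS.")
print(layer.recall("which language for scripting", k=1))
```

Small talk is dropped at extraction time, so recall only ever searches durable facts. The tools below replace each stand-in with something far more capable.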

Here are four popular production memory layers that address the core agentic challenge: maintaining coherent context across long-horizon tasks, tool calls, and multi-session interactions.

- Hindsight

Hindsight is an agent memory system with biomimetic memory banks (World facts, Experiences, Opinions, Observations) that learn continuously. It aims to overcome the limitations of plain RAG and graph-only retrieval and give agents reliable context across sessions.

How it works:

- Retain: Push information into memory banks; LLM extracts entities, relationships, time series, sparse/dense vectors for hybrid storage.

- Recall: Four parallel retrievals (semantic vectors, BM25 keywords, graph/temporal links, time ranges) are merged via reciprocal rank fusion, then refined with cross-encoder reranking.

- Reflect: Analyzes memories to form opinions/observations (failure patterns, strategies), writes derived memories back for learning.

- Dual banks separate world facts from agent experiences for targeted recall.
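The fusion step in Recall is easy to picture in code. Below is a generic sketch of reciprocal rank fusion, not Hindsight's implementation: the function name and the memory IDs are made up, `k=60` is simply the constant commonly used with this technique, and the cross-encoder reranking stage is left out.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs; each hit contributes 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from the four retrievers.
semantic = ["m1", "m2", "m3"]
keyword = ["m2", "m4", "m1"]
graph = ["m2", "m1"]
temporal = ["m5", "m2"]

print(reciprocal_rank_fusion([semantic, keyword, graph, temporal]))
```

A memory that several retrievers agree on rises to the top even if none of them ranked it first everywhere, which is why the approach works without calibrating scores across retrievers.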

Benchmark:

Reported 91.4% accuracy on LongMemEval, outperforming a GPT-4o full-context baseline.

Link:

Website: https://hindsight.vectorize.io

GitHub: https://github.com/vectorize-io/hindsight

- Mem0

Mem0 provides personalized, continuously learning AI through multi-level memory (User/Session/Agent): it extracts structured facts and preferences from interactions for token-efficient long-term recall.

How it works:

- Extract/Add: Raw messages/tool outputs → LLM extracts memory points with embeddings/metadata by scope (user/session/agent).

- Recall/Search: Query returns top-K relevant memories as text/JSON (memory.search(query, user_id)).

- Update: Versioning, pruning, conflict resolution; graph variant adds entity relations.

- Maintains personalization across multi-turn conversations without full history.
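The scoping idea is worth a quick illustration. The class below is a hypothetical in-memory stand-in, not the Mem0 client: it only mirrors the `search(query, user_id)` shape mentioned above, and it uses word overlap where a real system would use embeddings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryItem:
    text: str
    user_id: str


class ScopedMemory:
    """Hypothetical stand-in showing per-user scoping and top-K recall."""

    def __init__(self):
        self._items = []

    def add(self, text, user_id):
        self._items.append(MemoryItem(text, user_id))

    def search(self, query, user_id, top_k=3):
        q = set(query.lower().split())
        hits = []
        for item in self._items:
            if item.user_id != user_id:  # scope filter: never leak across users
                continue
            overlap = len(q & set(item.text.lower().split()))
            if overlap:
                hits.append((overlap, item.text))
        hits.sort(key=lambda hit: -hit[0])  # best overlap first
        return [text for _, text in hits[:top_k]]


memory = ScopedMemory()
memory.add("prefers vegetarian food", user_id="alice")
memory.add("prefers spicy food", user_id="bob")
memory.add("lives in Berlin", user_id="alice")
print(memory.search("what food does she like", user_id="alice"))
```

Only a handful of short, scoped memories reach the prompt, which is where the token savings come from.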

Benchmark:

On the LOCOMO benchmark, Mem0 is reported to outperform six leading memory approaches, achieving:

- 26% higher response accuracy compared to OpenAI’s memory

- 91% lower latency compared to the full-context method

- 90% savings in token usage, making memory practical and affordable at scale

Link:

Website: https://mem0.ai

GitHub: https://github.com/mem0ai/mem0

- Acontext

Acontext stores agent interactions as structured memories/skills, enabling self-evolving systems through observability, workflow extraction, and multi-agent coordination from production traces.

How it works:

- Store: Conversations, tool calls, artifacts, outcomes → persistent structured logs across sessions/agents.

- Observe: Dashboards/CLI monitor task patterns, failures, performance metrics.

- Learn: Distills successful trajectories into reusable skills/SOPs invoked as agent tools.

- Supports Anthropic/OpenAI for coordinated agent teams.
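The Learn step can be pictured with a deliberately naive sketch. Nothing here reflects Acontext's actual data model or API: the trace format and `distill_skills` are assumptions, and "pick the shortest successful run" is a stand-in for whatever distillation the real system performs.

```python
from collections import defaultdict


def distill_skills(traces):
    """Keep, per task, the shortest successful step sequence as a reusable skill."""
    successful = defaultdict(list)
    for trace in traces:
        if trace["success"]:  # failed runs teach nothing in this toy version
            successful[trace["task"]].append(trace["steps"])
    return {task: min(runs, key=len) for task, runs in successful.items()}


# Hypothetical production traces.
traces = [
    {"task": "deploy", "steps": ["build", "test", "push", "release"], "success": True},
    {"task": "deploy", "steps": ["build", "push", "release"], "success": True},
    {"task": "deploy", "steps": ["push"], "success": False},
    {"task": "refund", "steps": ["lookup", "refund"], "success": False},
]

print(distill_skills(traces))
```

Tasks with no successful run produce no skill, so the agent never reuses a procedure that has only ever failed.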

Link:

Website: https://acontext.io

GitHub: https://github.com/memodb-io/acontext

- Zep

Zep builds temporal knowledge graphs from user interactions, providing personalized context with continuous learning for AI assistants through fast, provenance-tracked fact retrieval.

How it works:

- Add: Messages/data → temporal knowledge graph with valid_at/invalid_at timestamps.

- Store: Contextual relationships with data provenance for state reasoning.

- Retrieve: Query relevant facts; Graphiti handles temporal graph updates.

- Python/TS/Go SDKs for enterprise integration.
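The valid_at/invalid_at idea is the interesting part, so here is a small sketch of it. This is not the Zep or Graphiti API: `Fact` and `TemporalFacts` are hypothetical, each subject/predicate pair is assumed to hold one value at a time, and facts are assumed to be added in chronological order.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    obj: str
    valid_at: datetime
    invalid_at: Optional[datetime] = None  # None means still valid


class TemporalFacts:
    """Hypothetical fact store that invalidates old facts instead of deleting them."""

    def __init__(self):
        self.facts = []

    def add(self, subject, predicate, obj, at):
        # A new value closes the currently open fact, so history stays queryable.
        for fact in self.facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.invalid_at is None:
                fact.invalid_at = at
        self.facts.append(Fact(subject, predicate, obj, valid_at=at))

    def as_of(self, subject, predicate, when):
        # Return the value whose validity window contains `when`, if any.
        for fact in self.facts:
            if (fact.subject, fact.predicate) != (subject, predicate):
                continue
            if fact.valid_at <= when and (fact.invalid_at is None or when < fact.invalid_at):
                return fact.obj
        return None


kg = TemporalFacts()
kg.add("alice", "works_at", "Acme", at=datetime(2023, 1, 1))
kg.add("alice", "works_at", "Globex", at=datetime(2024, 6, 1))
print(kg.as_of("alice", "works_at", datetime(2023, 9, 1)))
print(kg.as_of("alice", "works_at", datetime(2025, 1, 1)))
```

Because the old fact is closed rather than overwritten, the agent can answer both "where does Alice work?" and "where did Alice work last autumn?" from the same store.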

Benchmark:

According to the Zep paper, Zep demonstrates substantial improvements in both accuracy and latency compared to the baseline across both model variants. Using gpt-4o-mini, Zep achieved a 15.2% accuracy improvement over the baseline, while gpt-4o showed an 18.5% improvement. The reduced prompt size also led to significant reductions in latency compared to the baseline implementations.

Link:

Website: https://getzep.com

GitHub: https://github.com/getzep/zep

Conclusion:

Large language models are no longer the bottleneck. Context is.

As agents move from toy demos to long-horizon, tool-driven, multi-session workflows, memory architecture becomes the defining design choice. Vector stores alone are not enough. Full-transcript prompting does not scale. And naive logging does not create learning.

There is no single winner. The right choice depends on whether your primary challenge is personalization, long-term reasoning, state evolution, or multi-agent coordination.

What is clear is this: future agent systems will not be defined by bigger models, but by smarter memory layers.